| MDP & Environment |

Agent-Environment Interaction Loop |

|

Core cycle: observation of state → selection of action → environment transition → receipt of reward + next state |

All RL algorithms |

| MDP & Environment |

Markov Decision Process (MDP) Tuple |

|

(S, A, P, R, γ) with transition dynamics P(s′\|s,a) and reward function R(s,a,s′) |

| MDP & Environment |

State Transition Graph |

|

Full probabilistic transitions between discrete states |

Gridworld, Taxi, Cliff Walking |

| MDP & Environment |

Trajectory / Episode Sequence |

|

Sequence of (s₀, a₀, r₁, s₁, …, s_T) |

Monte Carlo, episodic tasks |

| MDP & Environment |

Continuous State/Action Space Visualization |

|

High-dimensional spaces (e.g., robot joints, pixel inputs) |

Continuous-control tasks (MuJoCo, PyBullet) |

| MDP & Environment |

Reward Function / Landscape |

|

Scalar reward as function of state/action |

All algorithms; especially reward shaping |

| MDP & Environment |

Discount Factor (γ) Effect |

|

How future rewards are weighted |

All discounted MDPs |
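
To make the discount factor row concrete, here is a minimal sketch of how γ weights future rewards along a single trajectory. The function name `discounted_return` and the three-step reward list are hypothetical, chosen only for illustration.

```python
def discounted_return(rewards, gamma):
    """Return G_0 = sum_k gamma**k * r_{k+1} for one episode's reward list."""
    g = 0.0
    # Work backwards so each step applies G_t = r_{t+1} + gamma * G_{t+1}.
    for r in reversed(rewards):
        g = r + gamma * g
    return g


# Hypothetical episode: the only reward arrives at the final step.
rewards = [0.0, 0.0, 1.0]
for gamma in (0.0, 0.5, 1.0):
    print(gamma, discounted_return(rewards, gamma))
```

With γ = 0 the delayed reward is ignored entirely, with γ = 0.5 it is worth 0.25 at the start of the episode, and with γ = 1 it keeps its full value.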

| Value & Policy |

State-Value Function V(s) |

|

Expected return from state s under policy π |

Value-based methods |

| Value & Policy |

Action-Value Function Q(s,a) |

|

Expected return from state-action pair |

Q-learning family |

| Value & Policy |

Policy π(s) or π(a\|s) |

|

Arrow overlays on grid (optimal policy), probability bar charts, or softmax heatmaps |

| Value & Policy |

Advantage Function A(s,a) |

|

Q(s,a) – V(s) |

A2C, A3C, PPO, TRPO |

| Value & Policy |

Optimal Value Function V* / Q* |

|

Solution to Bellman optimality |

Value iteration, Q-learning |
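
The relationship between V, Q, the advantage, and a greedy policy can be sketched on a tiny table. The Q-values, the policy `pi`, and the state and action names below are made up for illustration; only the identities V(s) = Σ_a π(a|s) Q(s,a) and A(s,a) = Q(s,a) – V(s) come from the definitions above.

```python
# Hypothetical Q-table for two states and two actions.
Q = {
    "s0": {"left": 1.0, "right": 3.0},
    "s1": {"left": 2.0, "right": 0.0},
}
# Hypothetical stochastic policy pi(a|s).
pi = {
    "s0": {"left": 0.5, "right": 0.5},
    "s1": {"left": 0.9, "right": 0.1},
}


def state_value(s):
    # V(s) is the policy-weighted average of Q(s, a).
    return sum(pi[s][a] * Q[s][a] for a in Q[s])


def advantage(s, a):
    # A(s, a) = Q(s, a) - V(s): how much better a is than the policy's average.
    return Q[s][a] - state_value(s)


def greedy_action(s):
    # Greedy policy improvement picks the action with the largest Q-value.
    return max(Q[s], key=Q[s].get)


for s in Q:
    print(s, state_value(s), {a: advantage(s, a) for a in Q[s]}, greedy_action(s))
```

Under the policy that defines V, the advantages average to zero in every state, which is why the advantage is a natural centered learning signal.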

| Dynamic Programming |

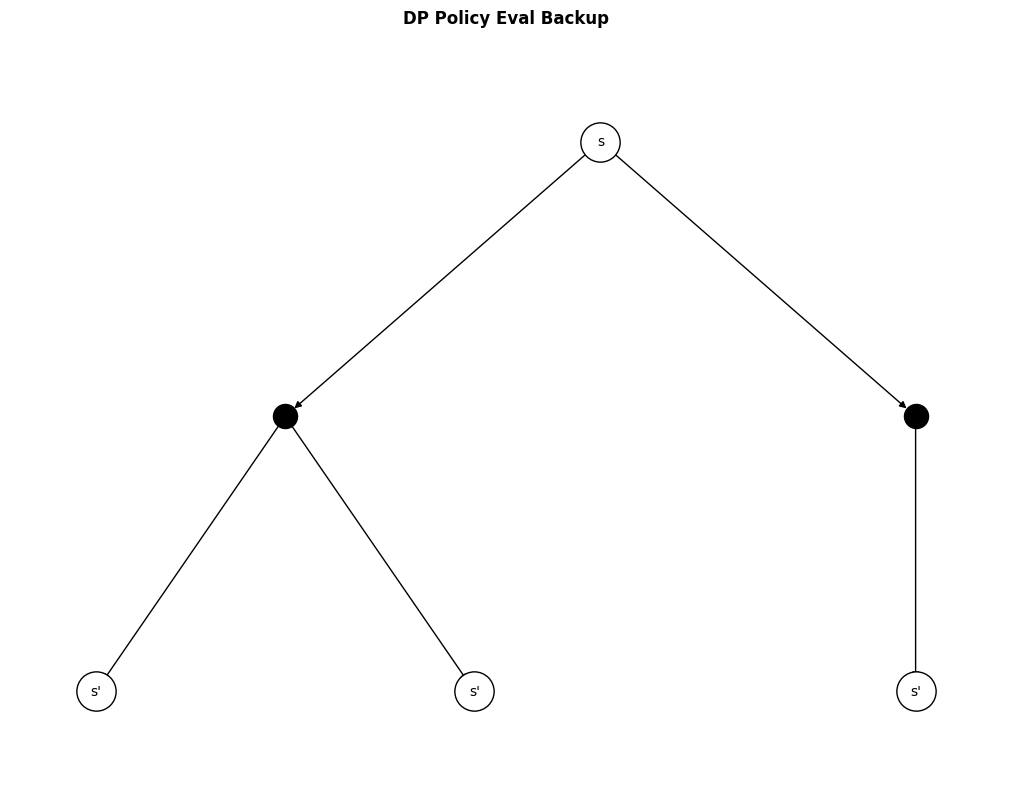

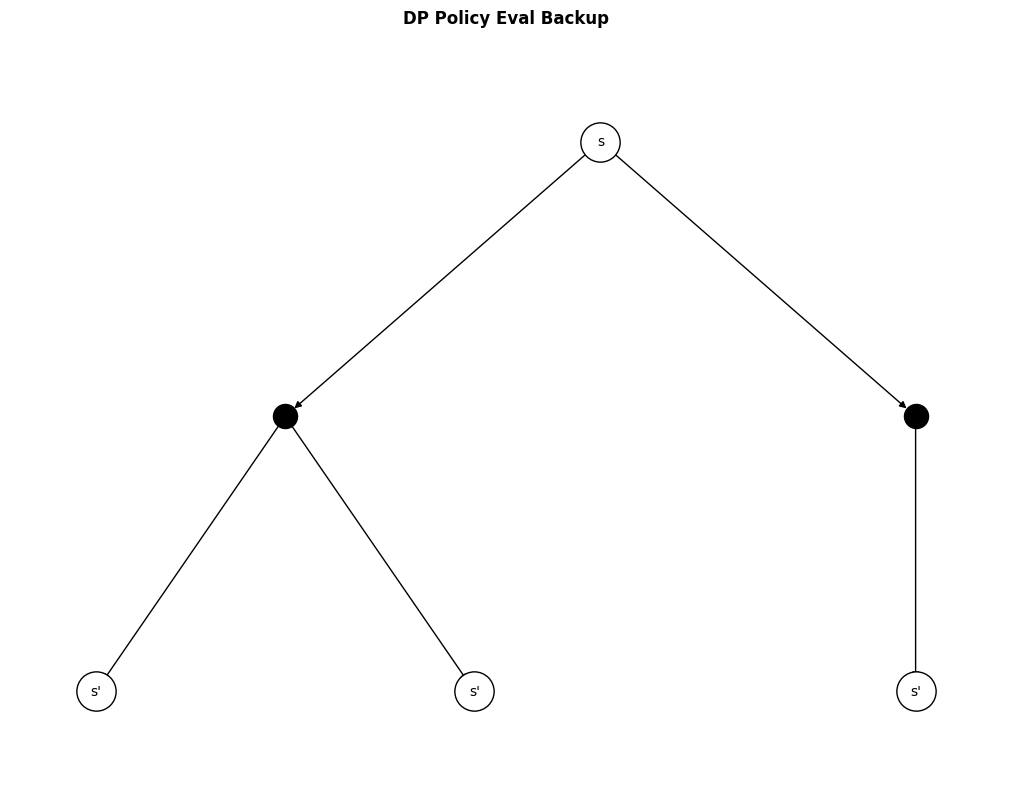

Policy Evaluation Backup |

|

Iterative update of V using Bellman expectation |

Policy iteration |

| Dynamic Programming |

Policy Improvement |

|

Greedy policy update over Q |

Policy iteration |

| Dynamic Programming |

Value Iteration Backup |

|

Update using Bellman optimality |

Value iteration |

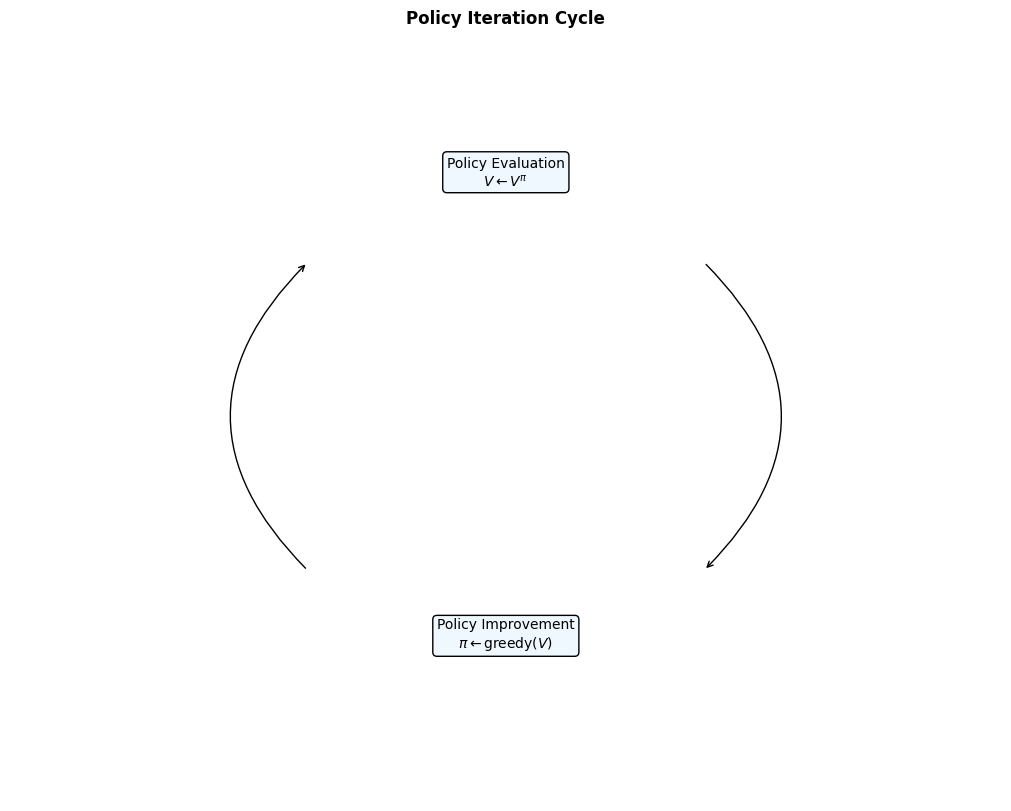

| Dynamic Programming |

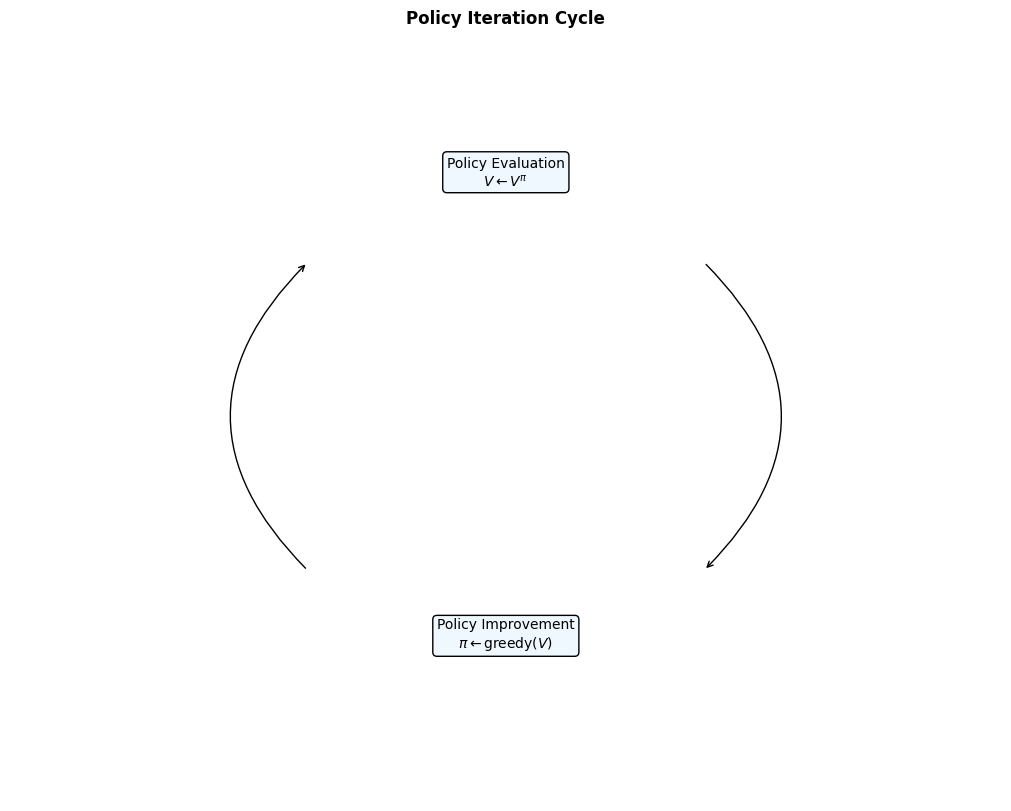

Policy Iteration Full Cycle |

|

Evaluation → Improvement loop |

Classic DP methods |
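
As an illustration of the Bellman optimality backup, the following sketch runs value iteration on a three-state chain and then extracts a greedy policy. The MDP, its action names, and `GAMMA = 0.9` are assumptions made up for this example.

```python
# P[s][a] is a list of (probability, next_state, reward) triples.
P = {
    0: {"stay": [(1.0, 0, 0.0)], "go": [(1.0, 1, 0.0)]},
    1: {"stay": [(1.0, 1, 0.0)], "go": [(1.0, 2, 1.0)]},
    2: {"stay": [(1.0, 2, 0.0)], "go": [(1.0, 2, 0.0)]},  # absorbing state
}
GAMMA = 0.9


def q_value(V, s, a):
    # One-step lookahead: expected reward plus discounted next-state value.
    return sum(p * (r + GAMMA * V[s2]) for p, s2, r in P[s][a])


def value_iteration(tol=1e-10):
    V = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            # Bellman optimality backup: V(s) <- max_a Q(s, a).
            best = max(q_value(V, s, a) for a in P[s])
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            return V


V = value_iteration()
# Policy improvement step: act greedily with respect to the converged values.
policy = {s: max(P[s], key=lambda a: q_value(V, s, a)) for s in P}
print(V, policy)
```

Policy iteration would alternate a full policy evaluation with this greedy improvement step; value iteration folds the two together by taking the max inside every backup.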

| Monte Carlo |

Monte Carlo Backup |

|

Update using full episode return G_t |

First-visit / every-visit MC |

| Monte Carlo |

Monte Carlo Tree Search (MCTS) |

|

Search tree with selection, expansion, simulation, and backpropagation |

AlphaGo, AlphaZero |

| Monte Carlo |

Importance Sampling Ratio |

|

Off-policy correction ρ = π(a\|s) / b(a\|s), the ratio of target-policy to behavior-policy probabilities |
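
For off-policy Monte Carlo, the per-step ratios are multiplied along the trajectory. The sketch below assumes tabular policies stored as nested dictionaries; the names `target` and `behavior` (written b here for the behavior policy) and the probabilities are hypothetical.

```python
def importance_ratio(trajectory, target, behavior):
    """Product over the trajectory of pi(a|s) / b(a|s)."""
    rho = 1.0
    for s, a in trajectory:
        # The behavior policy must give nonzero probability to every action taken.
        rho *= target[s][a] / behavior[s][a]
    return rho


# Hypothetical target policy pi and behavior policy b over one state.
target = {"s0": {"L": 0.9, "R": 0.1}}
behavior = {"s0": {"L": 0.5, "R": 0.5}}

trajectory = [("s0", "L"), ("s0", "L")]
print(importance_ratio(trajectory, target, behavior))
```

Returns sampled under the behavior policy are multiplied by this ratio before averaging, so trajectories the target policy favors count for more.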

| Temporal Difference |

TD(0) Backup |

|

Bootstrapped update using R + γV(s′) |

TD learning |

| Temporal Difference |

Bootstrapping (general) |

|

Using estimated future value instead of full return |

All TD methods |

| Temporal Difference |

n-step TD Backup |

|

Multi-step return G_t^{(n)} |

n-step TD, TD(λ) |

| Temporal Difference |

TD(λ) & Eligibility Traces |

|

Decaying trace z_t for credit assignment |

TD(λ), SARSA(λ), Q(λ) |

| Temporal Difference |

SARSA Update |

|

On-policy TD control |

SARSA |

| Temporal Difference |

Q-Learning Update |

|

Off-policy TD control |

Q-learning, Deep Q-Network |

| Temporal Difference |

Expected SARSA |

|

Expectation over next action under policy |

Expected SARSA |

| Temporal Difference |

Double Q-Learning / Double DQN |

|

Two separate Q estimators to reduce overestimation |

Double DQN, TD3 |

| Temporal Difference |

Dueling DQN Architecture |

|

Separate streams for state value V(s) and advantage A(s,a) |

Dueling DQN |

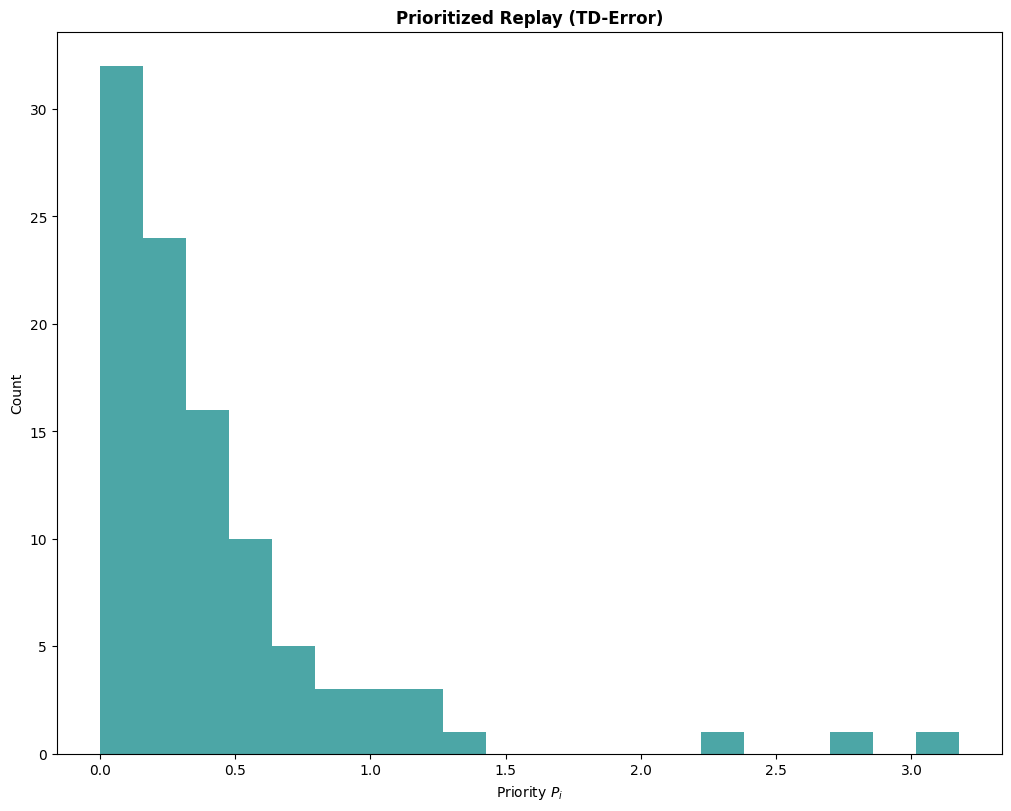

| Temporal Difference |

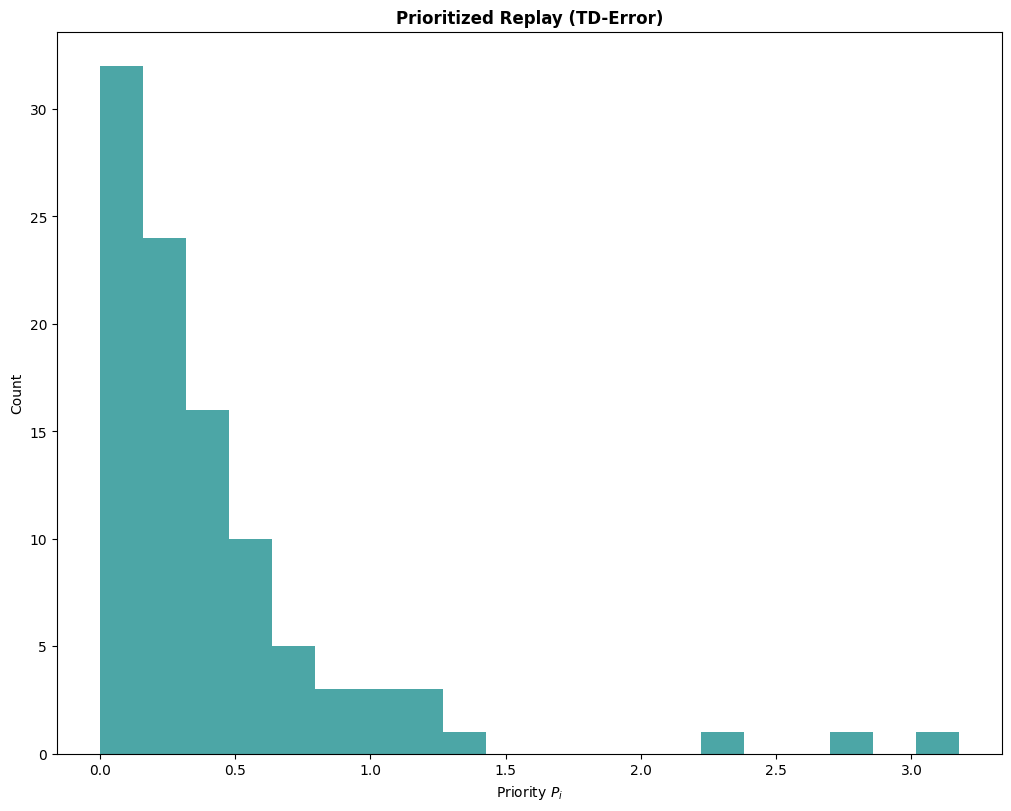

Prioritized Experience Replay |

|

Sampling transitions with probability based on TD-error magnitude, with importance-sampling weights to correct the resulting bias |

Prioritized DQN, Rainbow |

| Temporal Difference |

Rainbow DQN Components |

|

All extensions combined (Double, Dueling, PER, etc.) |

Rainbow DQN |
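
The three tabular TD control rows differ only in the bootstrap target. A minimal sketch, assuming a dictionary Q-table, a non-terminal next state, and hypothetical values for `ALPHA` and `GAMMA`:

```python
ALPHA, GAMMA = 0.5, 0.9  # assumed step size and discount factor


def sarsa_target(Q, r, s2, a2):
    # On-policy: bootstrap from the action actually taken next.
    return r + GAMMA * Q[s2][a2]


def q_learning_target(Q, r, s2):
    # Off-policy: bootstrap from the greedy action in the next state.
    return r + GAMMA * max(Q[s2].values())


def expected_sarsa_target(Q, r, s2, pi):
    # Bootstrap from the expectation over next actions under pi.
    return r + GAMMA * sum(pi[s2][a] * Q[s2][a] for a in Q[s2])


def td_update(Q, s, a, target):
    # Shared update rule: move Q(s, a) toward the chosen target.
    Q[s][a] += ALPHA * (target - Q[s][a])


# Hypothetical table and transition (s, a, r=1, s2).
Q = {"s": {"a": 0.0}, "s2": {"a": 1.0, "b": 2.0}}
pi = {"s2": {"a": 0.5, "b": 0.5}}
print(sarsa_target(Q, 1.0, "s2", "a"))
print(q_learning_target(Q, 1.0, "s2"))
print(expected_sarsa_target(Q, 1.0, "s2", pi))
td_update(Q, "s", "a", q_learning_target(Q, 1.0, "s2"))
print(Q["s"]["a"])
```

For a terminal next state the bootstrap term would be dropped and the target reduces to the reward alone.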

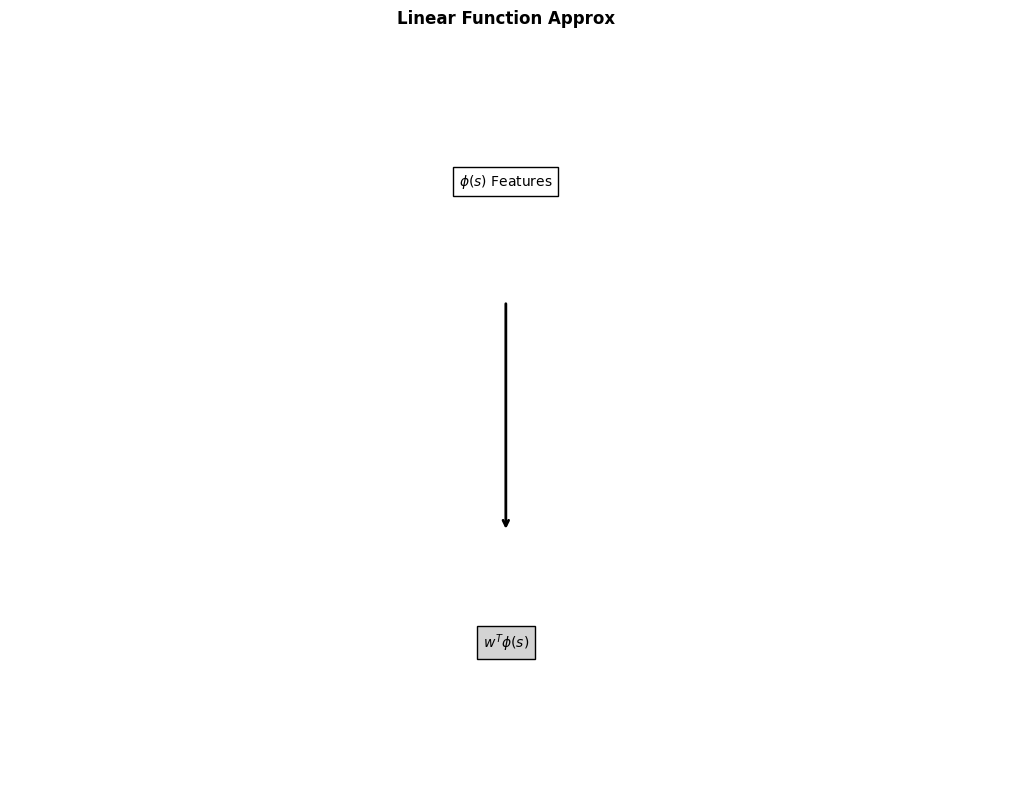

| Function Approximation |

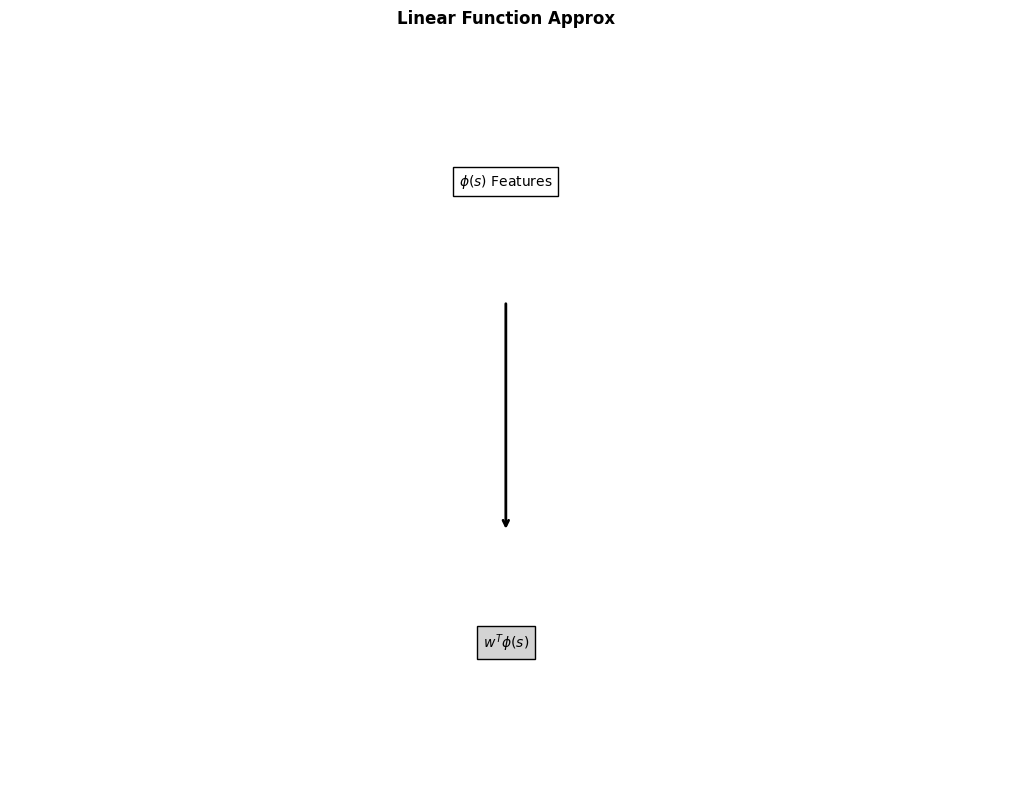

Linear Function Approximation |

|

Feature vector φ(s) → wᵀφ(s) |

Tabular → linear FA |

| Function Approximation |

Neural Network Layers (MLP, CNN, RNN, Transformer) |

|

Full deep network for value/policy |

DQN, A3C, PPO, Decision Transformer |

| Function Approximation |

Computation Graph / Backpropagation Flow |

|

Gradient flow through network |

All deep RL |

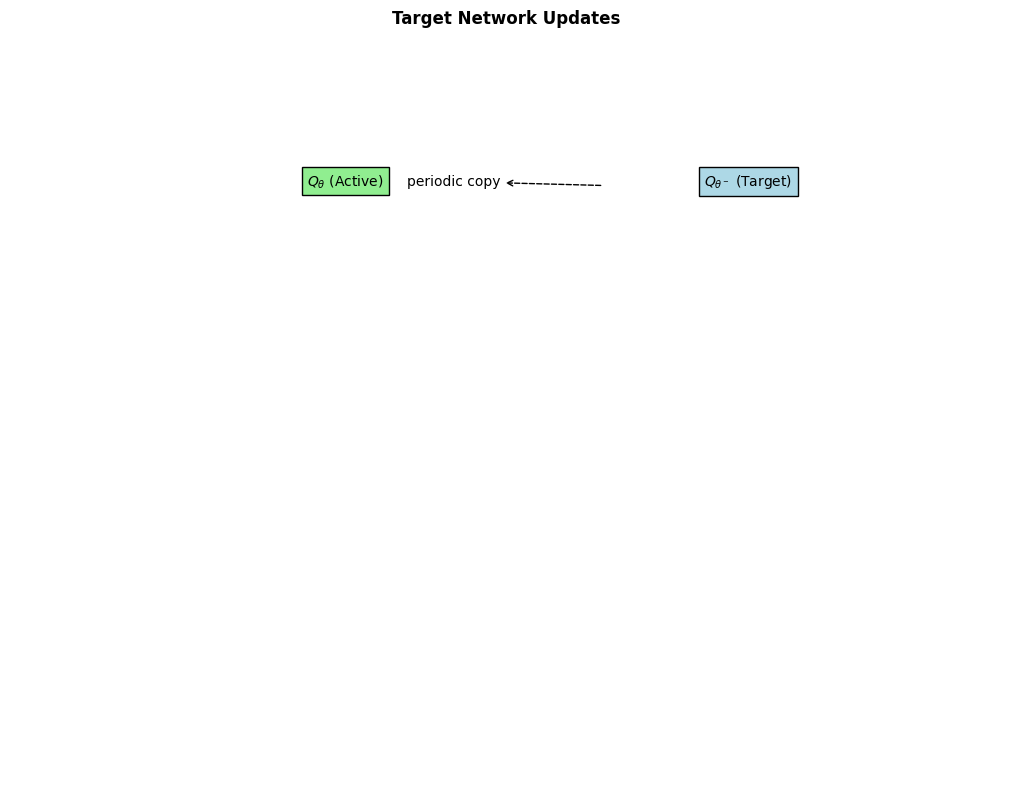

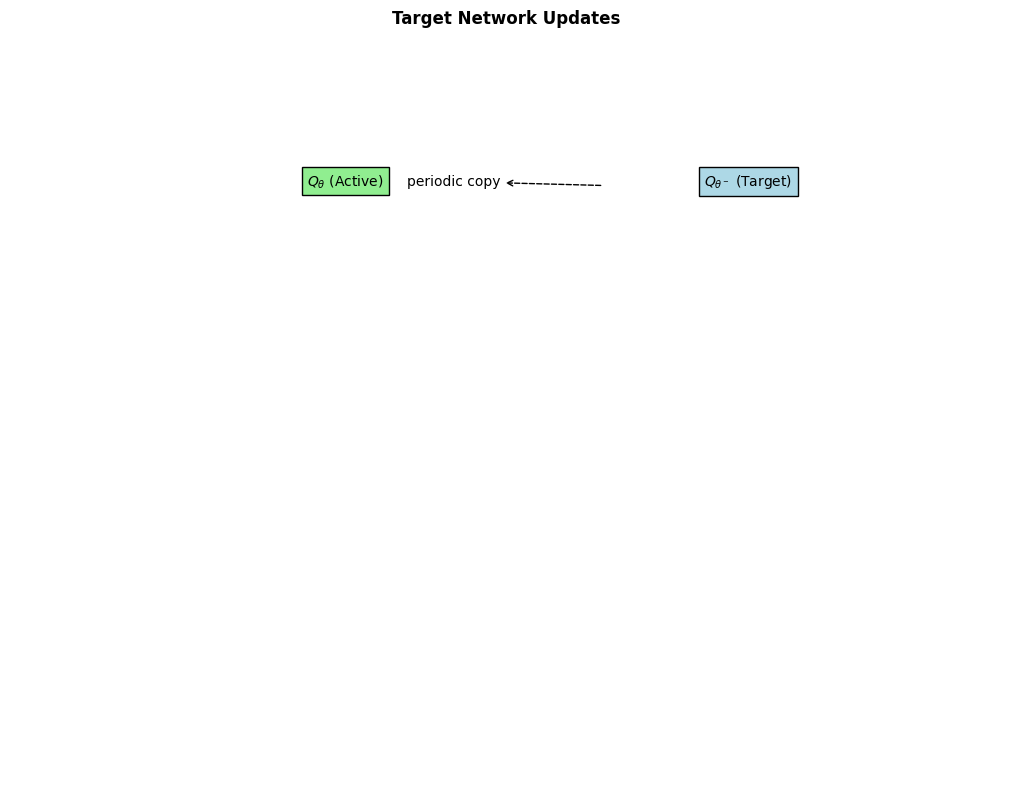

| Function Approximation |

Target Network |

|

Frozen copy of Q-network for stability |

DQN, DDQN, SAC, TD3 |
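
Two of the rows above can be sketched without a deep learning library: a semi-gradient TD(0) step for a linear value estimate wᵀφ(s), and a target-parameter update. The helper names, feature vectors, and step sizes are hypothetical. The soft (Polyak) update shown is one common variant used by methods such as SAC and TD3; DQN-style agents typically copy the weights wholesale every fixed number of steps instead.

```python
def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))


def semi_gradient_td0(w, phi_s, r, phi_s2, alpha=0.1, gamma=0.9):
    """One TD(0) step for the linear value estimate V(s) = w . phi(s)."""
    # TD error uses the bootstrapped target r + gamma * V(s').
    delta = r + gamma * dot(w, phi_s2) - dot(w, phi_s)
    # The gradient of w . phi(s) with respect to w is phi(s).
    return [wi + alpha * delta * xi for wi, xi in zip(w, phi_s)]


def soft_update(target_w, online_w, tau=0.005):
    """Polyak averaging: move target weights a fraction tau toward online weights."""
    return [(1.0 - tau) * t + tau * o for t, o in zip(target_w, online_w)]


# Hypothetical one-hot features for two states.
w = semi_gradient_td0([0.0, 0.0], phi_s=[1.0, 0.0], r=1.0, phi_s2=[0.0, 1.0])
print(w)
print(soft_update([0.0, 0.0], w, tau=0.5))
```

With one-hot features the linear update reduces to the tabular TD(0) rule, which is the sense in which tabular methods are a special case of linear function approximation.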

| Policy Gradients |

Policy Gradient Theorem |

|

∇_θ J(θ) = E[∇_θ log π(a\|s) Q^π(s,a)] |

Flow diagram from reward → log-prob → gradient |

| Policy Gradients |

REINFORCE Update |

|

Monte-Carlo policy gradient |

REINFORCE |

| Policy Gradients |

Baseline / Advantage Subtraction |

|

Subtract b(s) to reduce variance |

All modern PG |

| Policy Gradients |

Trust Region (TRPO) |

|

KL-divergence constraint on policy update |

TRPO |

| Policy Gradients |

Proximal Policy Optimization (PPO) |

|

Clipped surrogate objective |

PPO, PPO-Clip |
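
The clipped surrogate objective can be sketched in a few lines. This is an illustrative pure-Python version over a batch of precomputed probability ratios and advantage estimates; the function name, argument names, and the clip range `eps=0.2` are assumptions rather than settings taken from the table.

```python
def ppo_clip_objective(ratios, advantages, eps=0.2):
    """Mean of min(r * A, clip(r, 1 - eps, 1 + eps) * A) over a batch.

    ratios[i] stands for pi_new(a|s) / pi_old(a|s) on sample i.
    """
    total = 0.0
    for r, adv in zip(ratios, advantages):
        clipped = max(1.0 - eps, min(r, 1.0 + eps))
        # Taking the minimum removes any incentive to push r outside the
        # clip range in the direction that would increase the objective.
        total += min(r * adv, clipped * adv)
    return total / len(ratios)


print(ppo_clip_objective([1.5], [1.0]))   # gain is capped at (1 + eps) * A
print(ppo_clip_objective([0.5], [-1.0]))  # penalty is kept at (1 - eps) * A
print(ppo_clip_objective([0.5], [1.0]))   # unclipped term is already the minimum
```

TRPO enforces a similar limit on policy change through an explicit KL-divergence constraint; the clip is a simpler first-order substitute.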

| Actor-Critic |

Actor-Critic Architecture |

|

Separate or shared actor (policy) + critic (value) networks |

A2C, A3C, SAC, TD3 |

| Actor-Critic |

Advantage Actor-Critic (A2C/A3C) |

|

Synchronous/asynchronous multi-worker |

A2C/A3C |

| Actor-Critic |

Soft Actor-Critic (SAC) |

|

Entropy-regularized policy + twin critics |

SAC |

| Actor-Critic |

Twin Delayed DDPG (TD3) |

|

Twin critics + delayed policy updates + target policy smoothing |

TD3 |

| Exploration |

ε-Greedy Strategy |

|

Probability ε of random action |

DQN family |

| Exploration |

Softmax / Boltzmann Exploration |

|

Temperature τ in softmax |

Softmax policies |

| Exploration |

Upper Confidence Bound (UCB) |

|

Optimism in the face of uncertainty |

UCB1, bandits |

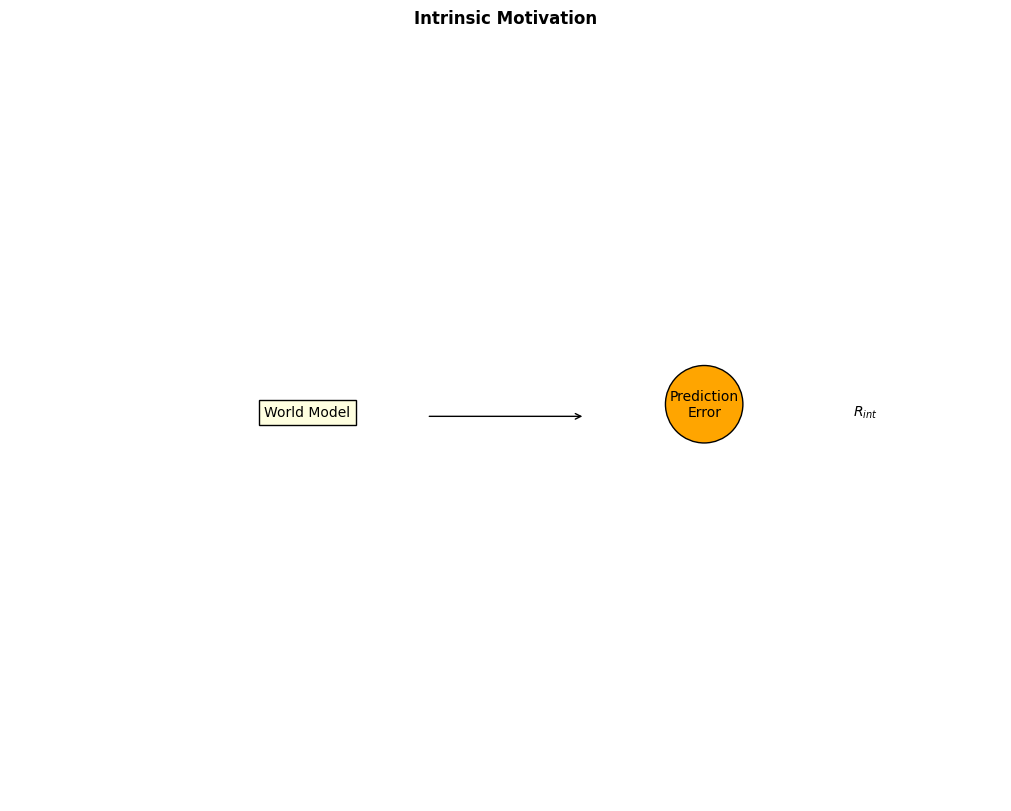

| Exploration |

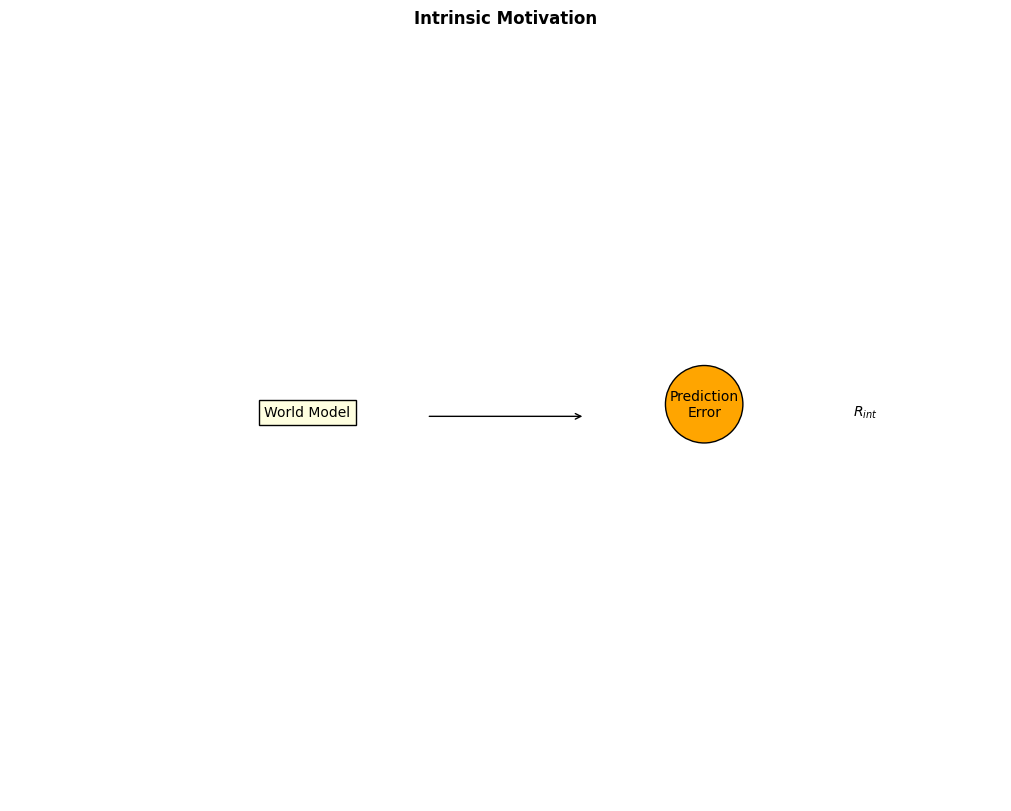

Intrinsic Motivation / Curiosity |

|

Prediction error as intrinsic reward |

ICM, RND, Curiosity-driven RL |

| Exploration |

Entropy Regularization |

|

Bonus term αH(π) |

SAC, maximum-entropy RL |
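
Three of the action-selection rules in this group can be sketched over a plain list of action-value estimates. The function names and the exploration constant `c` are hypothetical; the UCB score follows the usual UCB1 form, estimate plus sqrt(c · ln t / n), with untried actions selected first.

```python
import math
import random


def epsilon_greedy(q_values, epsilon, rng):
    # With probability epsilon pick a uniformly random action, else the greedy one.
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)


def softmax_probs(q_values, tau):
    # Boltzmann distribution; subtracting the max keeps exp() numerically stable.
    m = max(q_values)
    exps = [math.exp((q - m) / tau) for q in q_values]
    z = sum(exps)
    return [e / z for e in exps]


def ucb1_action(q_values, counts, t, c=2.0):
    # Try every action once before applying the confidence bonus.
    for a, n in enumerate(counts):
        if n == 0:
            return a
    scores = [q + math.sqrt(c * math.log(t) / n) for q, n in zip(q_values, counts)]
    return max(range(len(scores)), key=scores.__getitem__)


rng = random.Random(0)
q = [0.0, 1.0, 0.5]
print(epsilon_greedy(q, 0.1, rng))
print(softmax_probs(q, tau=1.0))
print(ucb1_action([0.5, 0.4], counts=[100, 1], t=101))
```

A high temperature τ pushes the softmax toward uniform random choice, while a low τ approaches greedy selection; the UCB bonus shrinks as an action's visit count grows.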

| Hierarchical RL |

Options Framework |

|

High-level policy over options (temporally extended actions) |

Option-Critic |

| Hierarchical RL |

Feudal Networks / Hierarchical Actor-Critic |

|

Manager-worker hierarchy |

Feudal RL |

| Hierarchical RL |

Skill Discovery |

|

Unsupervised emergence of reusable skills |

DIAYN, VALOR |

| Model-Based RL |

Learned Dynamics Model |

|

P̂(s′\|s,a) |

Separate model network diagram (often RNN or transformer) |

| Model-Based RL |

Model-Based Planning |

|

Rollouts inside learned model |

MuZero, DreamerV3 |

| Model-Based RL |

Imagination-Augmented Agents (I2A) |

|

Imagination module + policy |

I2A |

| Offline RL |

Offline Dataset |

|

Fixed batch of trajectories |

BC, CQL, IQL |

| Offline RL |

Conservative Q-Learning (CQL) |

|

Penalty on out-of-distribution actions |

CQL |

| Multi-Agent RL |

Multi-Agent Interaction Graph |

|

Agents communicating or competing |

MARL, MADDPG |

| Multi-Agent RL |

Centralized Training Decentralized Execution (CTDE) |

|

Centralized critic or value mixer during training; each agent acts on local observations at execution |

QMIX, VDN, MADDPG |

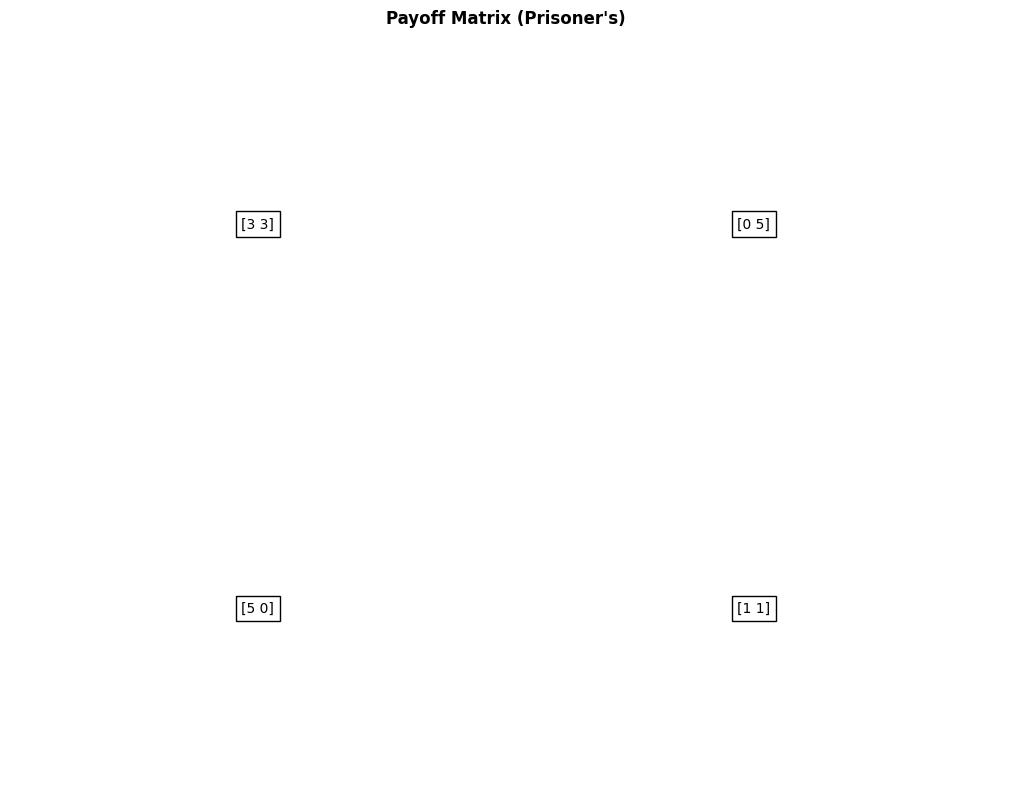

| Multi-Agent RL |

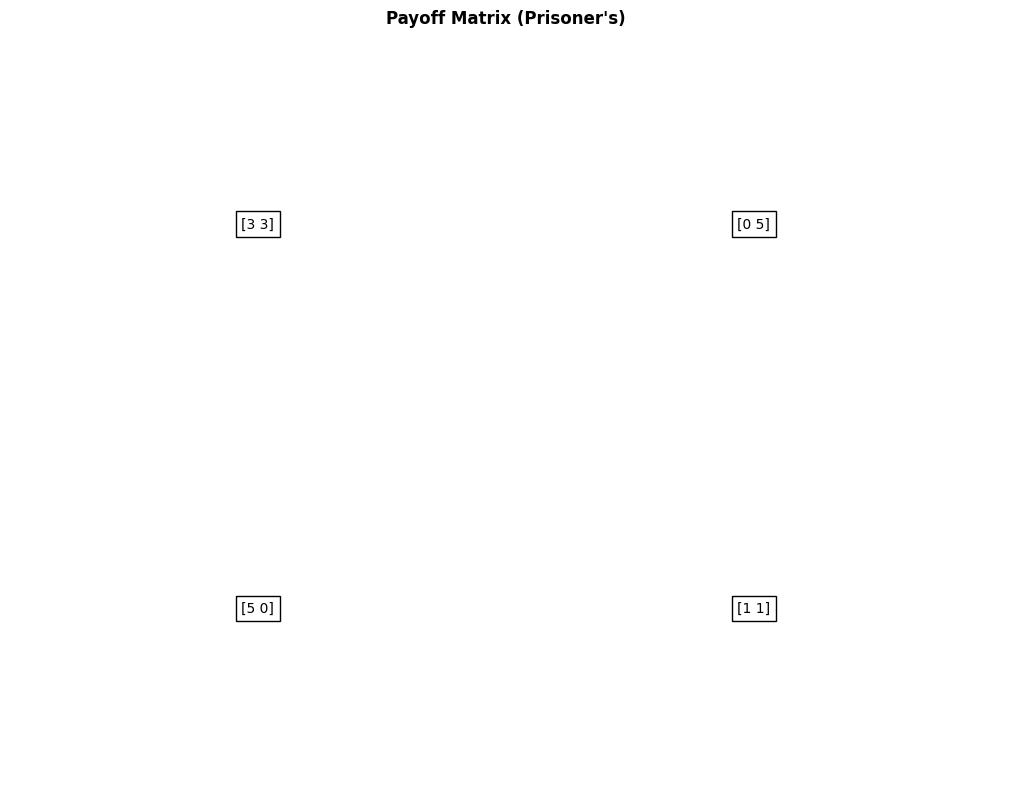

Cooperative / Competitive Payoff Matrix |

|

Joint reward for multiple agents |

Prisoner's Dilemma, multi-agent gridworlds |

| Inverse RL / IRL |

Reward Inference |

|

Infer reward from expert demonstrations |

IRL, GAIL |

| Inverse RL / IRL |

Generative Adversarial Imitation Learning (GAIL) |

|

Discriminator vs. policy generator |

GAIL, AIRL |

| Meta-RL |

Meta-RL Architecture |

|

Outer loop (meta-policy) + inner loop (task adaptation) |

MAML for RL, RL² |

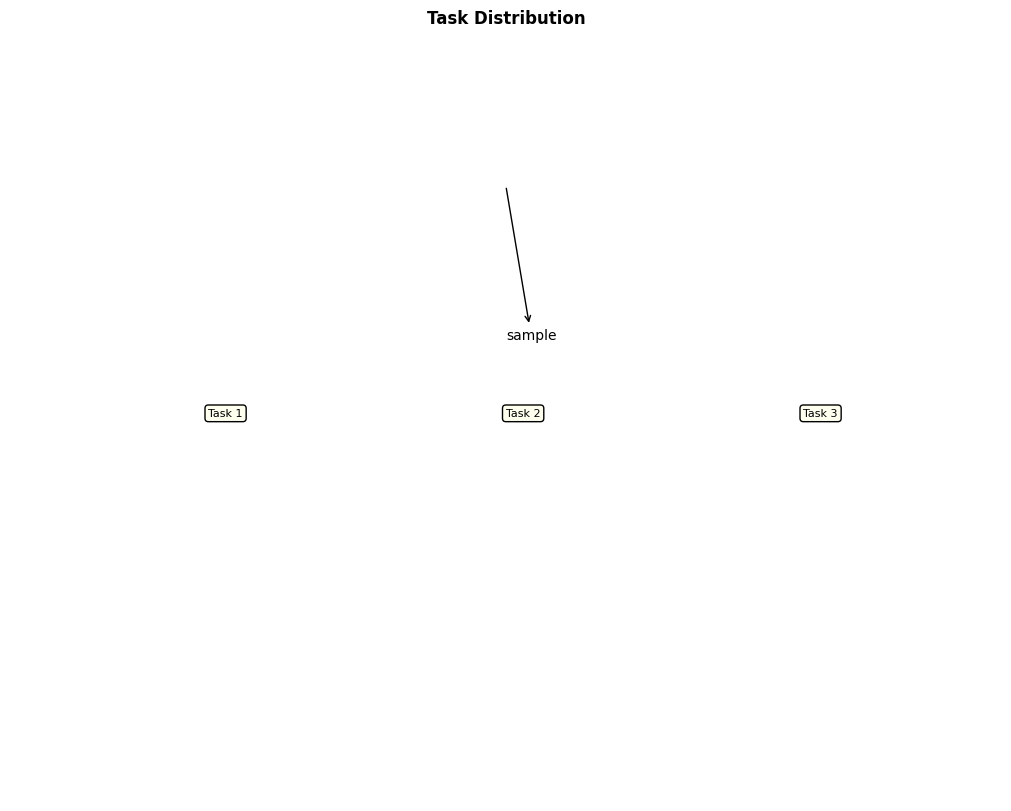

| Meta-RL |

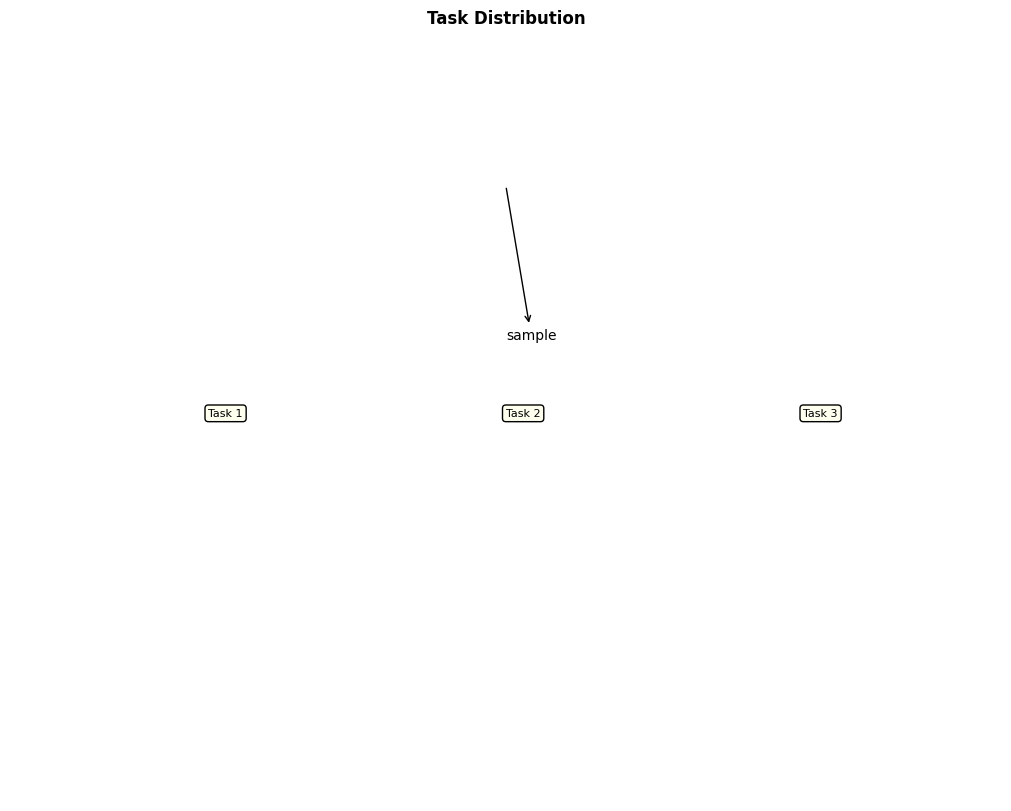

Task Distribution Visualization |

|

Multiple MDPs sampled from meta-distribution |

Meta-RL benchmarks |

| Advanced / Misc |

Experience Replay Buffer |

|

Stored (s,a,r,s′,done) tuples |

DQN and all off-policy deep RL |

| Advanced / Misc |

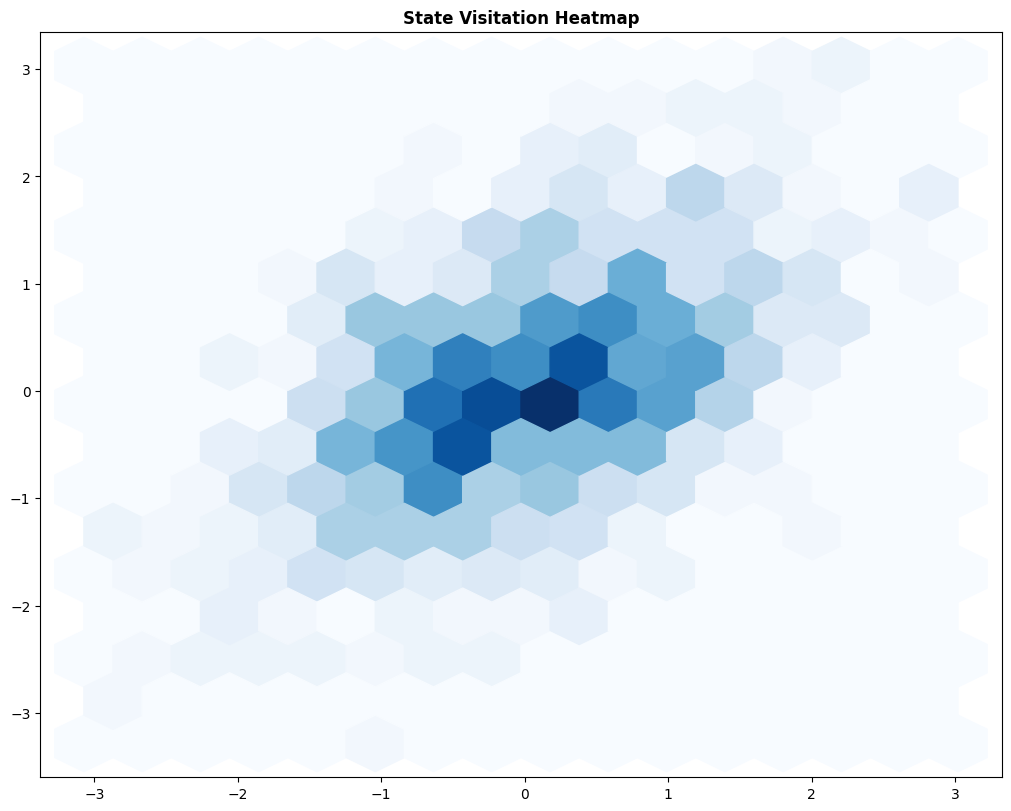

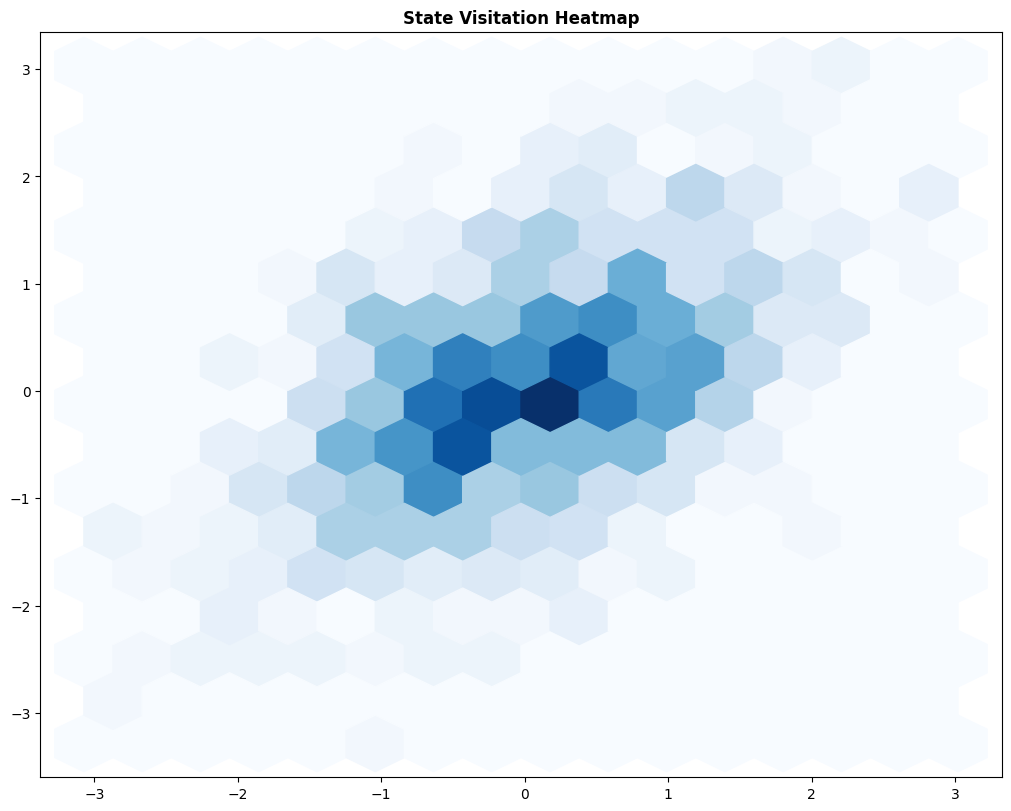

State Visitation / Occupancy Measure |

|

Frequency of visiting each state |

All algorithms (analysis) |

| Advanced / Misc |

Learning Curve |

|

Average episodic return vs. episodes / steps |

Standard performance reporting |

| Advanced / Misc |

Regret / Cumulative Regret |

|

Sub-optimality accumulated |

Bandits and online RL |

| Advanced / Misc |

Attention Mechanisms (Transformers in RL) |

|

Attention weights |

Decision Transformer, Trajectory Transformer |

| Advanced / Misc |

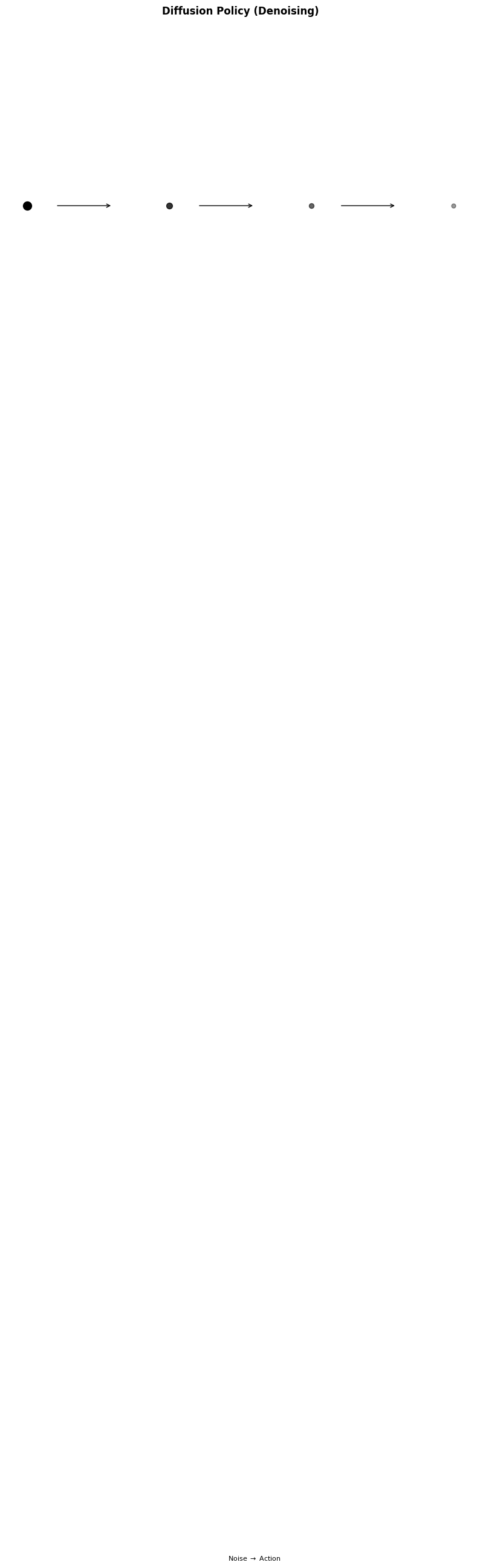

Diffusion Policy |

|

Denoising diffusion process for action generation |

Diffusion-RL policies |

| Advanced / Misc |

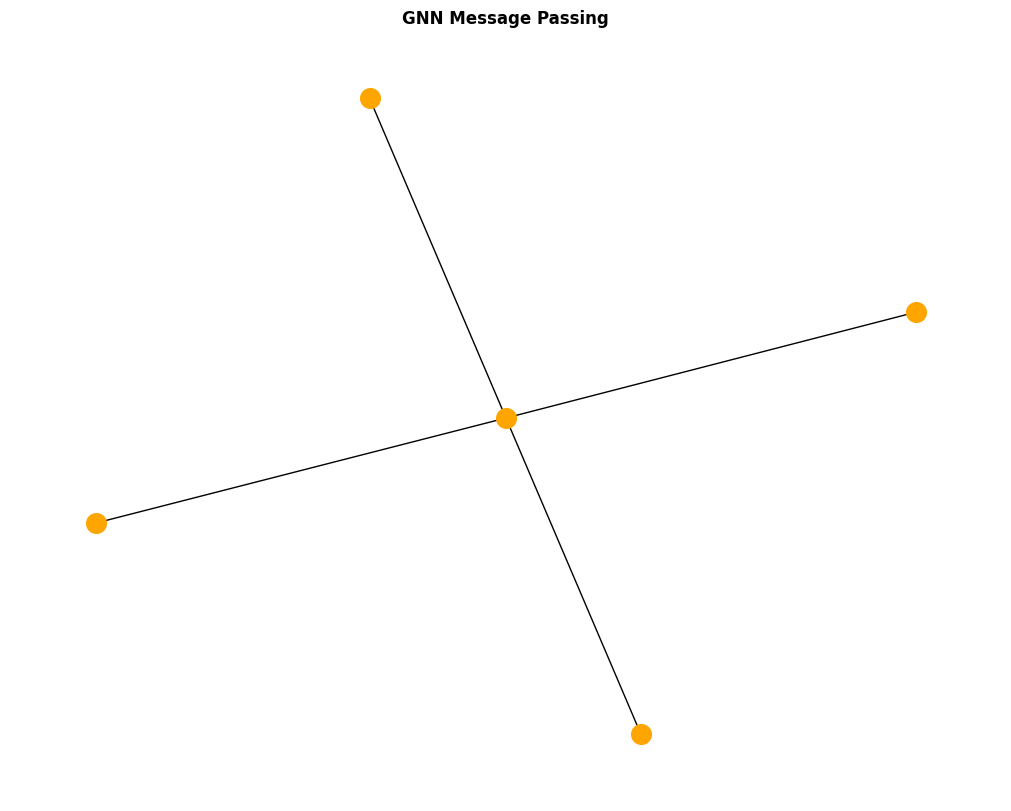

Graph Neural Networks for RL |

|

Node/edge message passing |

Graph RL, relational RL |

| Advanced / Misc |

World Model / Latent Space |

|

Encoder-decoder dynamics in latent space |

Dreamer, PlaNet |

| Advanced / Misc |

Convergence Analysis Plots |

|

Error / value change over iterations |

DP, TD, value iteration |